I invented the in-crowd algorithm, the world's fastest basis pursuit denoising (BPDN) solver for very large, very sparse problems.

Sensory perception involves harmonizing expectations about the real world with incoming data. There are millions of possible configurations of the real world that would all give rise to exactly the stimuli you are experiencing now. Only one of them is true; that is the one you would like to perceive. Occam's razor suggests that the simplest explanation consistent with the data is usually the best. However, finding the simplest possible explanation can be computationally challenging.

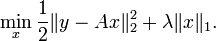

Engineers tackle the problem of how to determine the simplest possible set of beliefs that are consistent with incoming data by solving a special kind of regression problem: one that minimizes both the complexity of the beliefs and the disagreement between beliefs and data. One very popular method for applying this mathematical Occam's razor is BPDN, which is defined as solving the following problem:
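In its usual textbook form, BPDN reads as follows. The symbols are assumed notation rather than anything fixed by this page: $y$ is the incoming signal, $A$ is a dictionary whose columns are the candidate explanations, $x$ holds the strength of each explanation, and $\lambda$ is the free parameter.

$$
\hat{x} \;=\; \arg\min_{x} \; \tfrac{1}{2}\,\lVert y - A x \rVert_2^2 \;+\; \lambda\,\lVert x \rVert_1
$$

The first term measures disagreement between beliefs and data; the second measures the complexity of the beliefs.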

BPDN explains the incoming signal (by lowering the first term) in the simplest possible way (by minimizing the second term). The free parameter "lambda" controls the relative importance of signal fidelity and simplicity.

I devised the in-crowd algorithm to solve BPDN while trying to interpret data from an array of angle-sensitive pixels where I suspected the sources were sparse. MATLAB had built-in routines for solving BPDN, but on our problems the built-in function would have taken years. Brains solve sensory problems hierarchically, with slower communication between sensory areas than within them. In a 2011 publication I proved a theorem showing that splitting problems hierarchically can lead to exact BPDN solutions, and I showed that algorithmically it is faster to split active explanations of incoming data from inactive, potential explanations. Here is a MATLAB implementation of the in-crowd algorithm. The in-crowd algorithm is currently the fastest solver of BPDN on large, sparse problems like the ones brains solve.
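The active/inactive split can be sketched in a few lines of Python. This is only an illustration of the idea, not the MATLAB implementation: the names `in_crowd`, `solve_bpdn_subproblem`, and `batch` are hypothetical, the inner solver is a plain coordinate descent swapped in for simplicity, and the objective is assumed to be the standard form 0.5*||y - A x||^2 + lam*||x||_1.

```python
import numpy as np

def soft_threshold(v, t):
    """Shrink v toward zero by t (proximal operator of t * |.|)."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def solve_bpdn_subproblem(A_sub, y, lam, x0, n_sweeps=2000, tol=1e-10):
    """Coordinate descent on 0.5*||y - A_sub x||^2 + lam*||x||_1.
    Stand-in inner solver (an assumption made for this sketch)."""
    x = x0.copy()
    r = y - A_sub @ x                      # residual for the current x
    col_sq = np.sum(A_sub ** 2, axis=0)    # squared column norms
    for _ in range(n_sweeps):
        max_change = 0.0
        for j in range(A_sub.shape[1]):
            if col_sq[j] == 0.0:
                continue
            old = x[j]
            # Correlation of column j with the residual that ignores x[j].
            rho = A_sub[:, j] @ r + col_sq[j] * old
            new = soft_threshold(rho, lam) / col_sq[j]
            if new != old:
                r -= A_sub[:, j] * (new - old)
                x[j] = new
                max_change = max(max_change, abs(new - old))
        if max_change < tol:
            break
    return x

def in_crowd(A, y, lam, batch=25, max_outer=100):
    """Active-set sketch: solve BPDN only over the currently active columns."""
    n = A.shape[1]
    x = np.zeros(n)
    active = np.zeros(n, dtype=bool)       # the "in-crowd"
    for _ in range(max_outer):
        r = y - A @ x
        usefulness = np.abs(A.T @ r)       # how much each column could help
        usefulness[active] = 0.0           # only score inactive columns
        candidates = np.flatnonzero(usefulness > lam * (1 + 1e-6))
        if candidates.size == 0:
            break                          # no inactive column can lower the cost
        order = np.argsort(usefulness[candidates])[::-1][:batch]
        active[candidates[order]] = True   # admit the most useful candidates
        idx = np.flatnonzero(active)
        x_sub = solve_bpdn_subproblem(A[:, idx], y, lam, x[idx])
        x = np.zeros(n)
        x[idx] = x_sub
        active = x != 0.0                  # drop members that were zeroed out
    return x
```

The expensive dense step (`A.T @ r`) runs once per outer pass, while the repeated inner work only touches the small set of active columns; that is where the savings on large, sparse problems would come from.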

Acetylcholine is a neurochemical that modulates the effective strength of bottom-up sensory data relative to top-down expectations. Acetylcholine concentration is a single free parameter altering perception that seems to serve the same function as lambda in BPDN. My current research aims to find the relationship between acetylcholine concentration and lambda, showing that algorithmic considerations can predict features of neurobiology and provide additional means of investigating neurobiology.

To investigate this relationship, I've started doing cross-species experiments in humans and rats examining perceptual hysteresis and how it shifts with task demands, acetylcholine function, and normal aging.

Morse code assigns shorter symbols to more common letters and phrases (a warm 73 to those in the know) because we want to be able to communicate quickly. The coding scheme used by telegraphers minimizes (or at least reduces) the expected time it takes to send typical messages.
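The effect of matching code length to symbol frequency can be checked with a toy example. Morse code itself is not a Huffman code; the sketch below uses a binary Huffman code as a simpler stand-in for the same principle, and the four-letter alphabet and its probabilities are made up for illustration.

```python
import heapq
from math import ceil, log2

def huffman_lengths(probs):
    """Return {symbol: code length in bits} for a binary Huffman code."""
    # Heap entries: (probability, tie-breaker, symbols in this subtree).
    heap = [(p, i, [s]) for i, (s, p) in enumerate(probs.items())]
    heapq.heapify(heap)
    lengths = {s: 0 for s in probs}
    counter = len(heap)
    while len(heap) > 1:
        p1, _, syms1 = heapq.heappop(heap)
        p2, _, syms2 = heapq.heappop(heap)
        # Merging two subtrees adds one bit to every symbol below them.
        for s in syms1 + syms2:
            lengths[s] += 1
        heapq.heappush(heap, (p1 + p2, counter, syms1 + syms2))
        counter += 1
    return lengths

# Hypothetical letter probabilities (not real English frequencies).
probs = {"E": 0.5, "T": 0.25, "A": 0.125, "Q": 0.125}
lengths = huffman_lengths(probs)

expected_variable = sum(probs[s] * lengths[s] for s in probs)
expected_fixed = ceil(log2(len(probs)))  # every letter gets the same length

print(lengths)            # {'E': 1, 'T': 2, 'A': 3, 'Q': 3}
print(expected_variable)  # 1.75 bits per letter, versus 2 for the fixed code
```

With these assumed probabilities the frequency-matched code needs 1.75 bits per letter on average instead of 2, a saving that grows as the letter frequencies become more uneven.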

On a similar note, firing action potentials in the brain is metabolically expensive. If there is a coding scheme that delivers the full message without requiring excessively many action potentials, it's likely that evolution has converged towards that coding scheme.

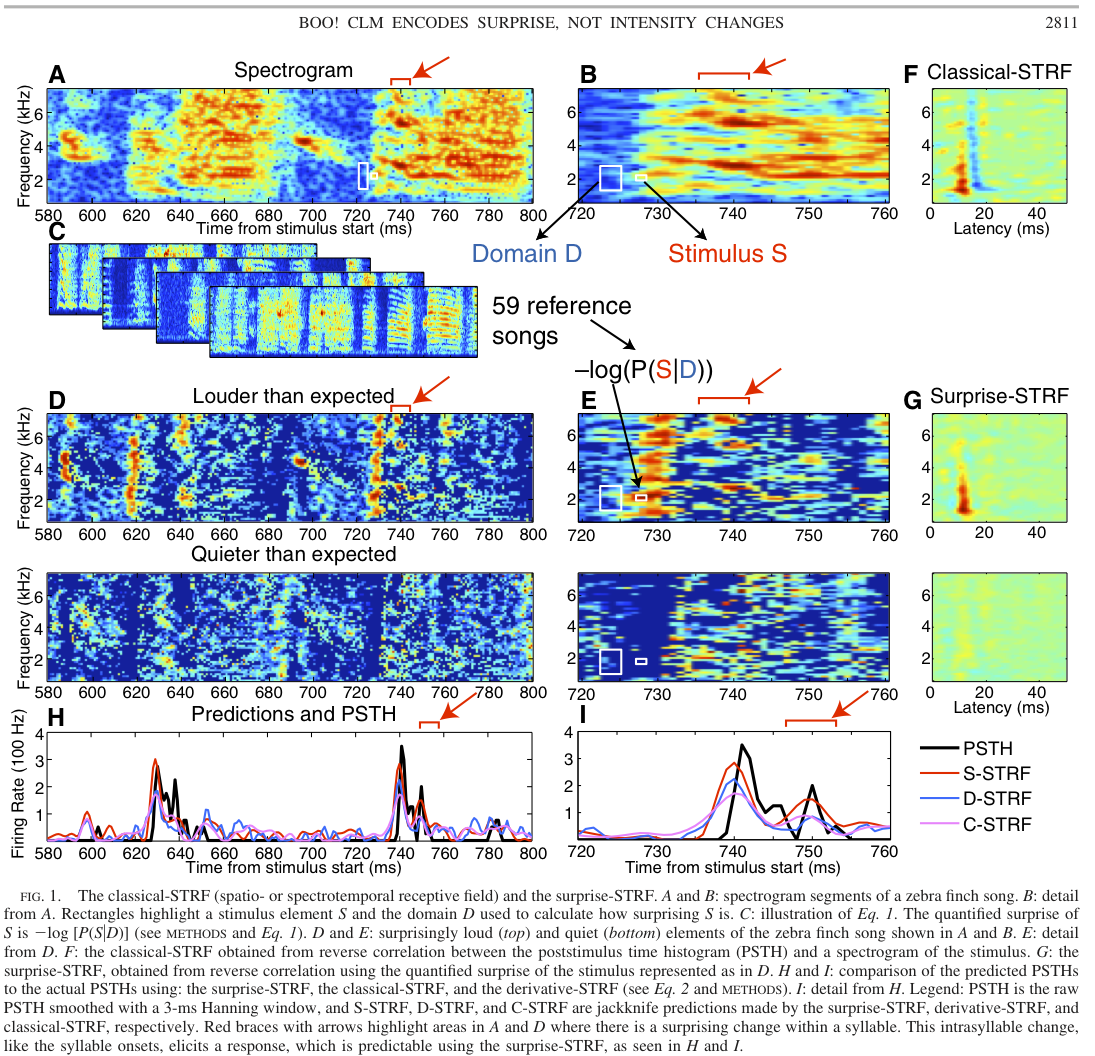

In a 2008 paper, I showed that information theory can explain more of the sensory code of the auditory forebrain of the zebra finch than models that assume the auditory areas relay either stimulus intensity or intensity changes. I did this by first discovering which features of birdsong are the most predictable (see figure below), and then assuming that the auditory code has found a way to encode these features with few spikes. This study allowed us to understand neurons in the secondary forebrain area CLM about as well as we understand neurons in the auditory midbrain area MLd. A good analogy in the visual system would be a technique that rendered neurons in area V4 about as predictable as our best models of the LGN.

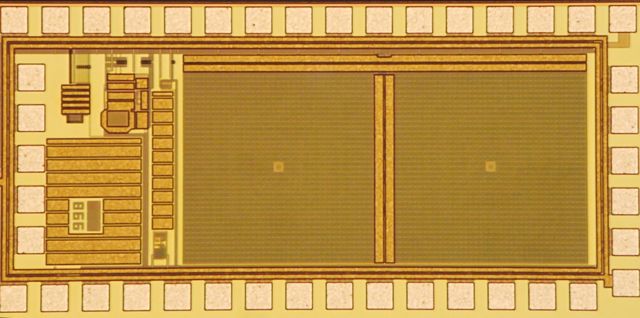

The planar Fourier capture array (PFCA) is the world's smallest camera. It is built out of an array of angle-sensitive pixels (ASPs), each of which reports one component of the Fourier transform of the far-away scene. I designed the first prototype, and led the team that built and tested it. Here is a photo of our first prototype:

Using just the Fourier information captured by the PFCA, we can reconstruct low-resolution images of the far-away scene, like this one:
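To give a feel for why a limited set of Fourier components yields a low-resolution image, here is an idealized sketch in Python with NumPy. It does not model ASPs or the PFCA's actual reconstruction: it simply takes the 2-D FFT of a known test scene as a stand-in for the measurements, keeps only the lowest spatial frequencies, and inverts. The function name `lowpass_reconstruct` and the parameter `keep` are hypothetical.

```python
import numpy as np

def lowpass_reconstruct(scene, keep):
    """Rebuild `scene` from its Fourier components with |frequency| <= keep.

    Returns the reconstruction and the number of components used.
    Assumes keep is smaller than half of each image dimension.
    """
    F = np.fft.fftshift(np.fft.fft2(scene))   # zero frequency moved to the center
    rows, cols = scene.shape
    cy, cx = rows // 2, cols // 2
    mask = np.zeros(F.shape, dtype=bool)
    # Symmetric window around the center, so conjugate pairs are kept together.
    mask[cy - keep:cy + keep + 1, cx - keep:cx + keep + 1] = True
    recon = np.fft.ifft2(np.fft.ifftshift(F * mask)).real
    return recon, int(mask.sum())

# A 32x32 test scene containing a bright square (made up for illustration).
scene = np.zeros((32, 32))
scene[10:20, 12:22] = 1.0

for keep in (2, 8):
    recon, used = lowpass_reconstruct(scene, keep)
    err = np.mean((recon - scene) ** 2)
    print(f"{used} components -> mean squared error {err:.4f}")
```

Keeping more components can only lower the squared error, so the image sharpens as the number of distinct Fourier measurements grows.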

I have developed MATLAB software that simulates ASPs. To build ASPs, I wrote my own CAD software, not available for download (sorry - you'll have to hire me for that!).

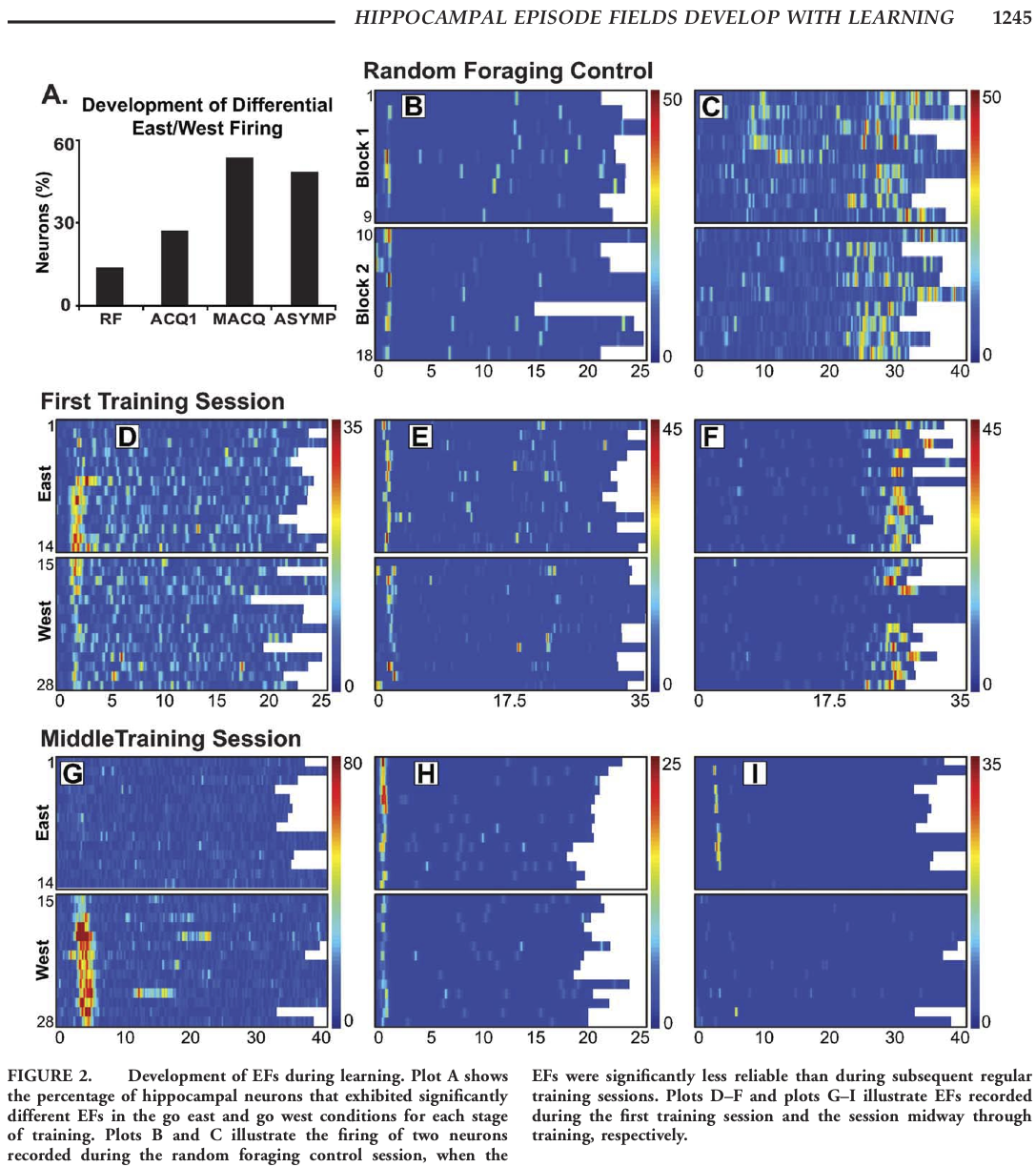

In 2011 I published a paper showing how certain timekeeper neurons in the rat hippocampus fire at specific delays relative to the inter-trial interval time. Strings of neurons with so-called "episode fields" or "time fields" fire in a stereotyped order. Moreover, the rat's goal begins to influence which strings become active as the rat learns the task. The figure below reveals the development of differential East/West firing as the rat learns the task.