RESEARCH INTERESTS

- Non-Linear Optimization

- Gradient-Based Algorithms

- Sequential Unconstrained Minimization Technique (SUMT):

Penalty Method, Log-Barrier Method, Augmented Lagrange Multiplier (ALM) Method

- Direct Method:

Sequential Quadratic Programming (SQP) -- Active-Set & Gradient-Projection

- Zeroth-Order Evolutionary Algorithm

Genetic Algorithm

- Maratos Effects

Stagnation in optimization that occurs when steps that would give a quadratic convergence rate are rejected because they increase both the objective function value and the constraint norm.

- Optimization and Mathematical Programming Applications

- Research Programs: SNOPT (Stanford), MMA (KTH)

- Commercial Programs: LINGO-LINDO, MPL, Crystal Ball, MATLAB Optimization Toolbox

- Sensitivity Analysis and Numerical Gradient Calculation

- Finite Difference (Forward- & Central-Step)

Relative Finite Step Size

- Complex Step

Imaginary Step Size

- Semi-Analytical Adjoint Sensitivity Calculation

With the adjoint sensitivity calculation, the gradient of a solver-based function is obtained with only a single solution loop of the solver, rather than one loop per design variable.

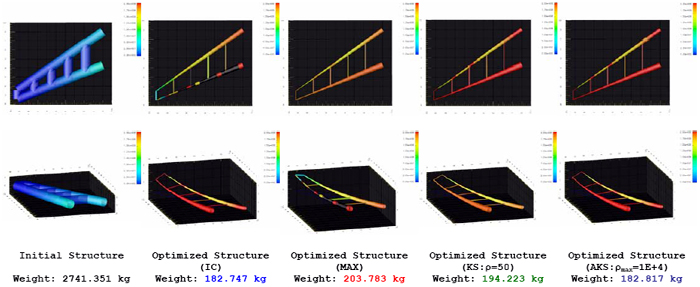

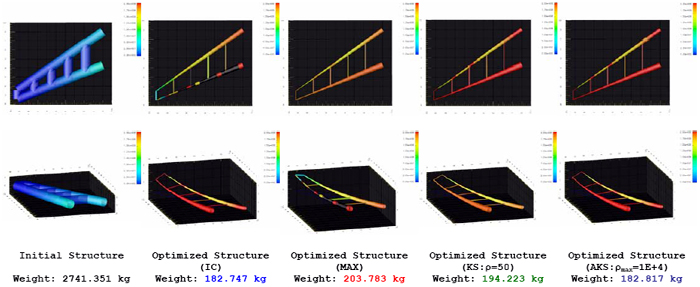
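
The three gradient approaches listed above can be compared on a toy problem. The sketch below is illustrative only and is not taken from the research itself: it assumes a hypothetical two-spring system with stiffnesses `x = (k0, k1)`, a unit tip load, and the tip displacement as the response. The names `stiffness`, `response`, `adjoint_gradient`, and the step sizes are assumptions made for this example.

```python
def solve2(a, b):
    """Solve the 2x2 linear system a @ x = b by Cramer's rule."""
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    x0 = (b[0] * a[1][1] - a[0][1] * b[1]) / det
    x1 = (a[0][0] * b[1] - b[0] * a[1][0]) / det
    return [x0, x1]


def stiffness(x):
    """Stiffness matrix K(x) of two springs in series (hypothetical model)."""
    k0, k1 = x
    return [[k0 + k1, -k1], [-k1, k1]]


def stiffness_derivatives():
    """dK/dk0 and dK/dk1 for the model above (constant matrices)."""
    return [
        [[1.0, 0.0], [0.0, 0.0]],
        [[1.0, -1.0], [-1.0, 1.0]],
    ]


LOAD = [0.0, 1.0]    # unit load at the tip
SELECT = [0.0, 1.0]  # response g(u) = SELECT . u (tip displacement)


def response(x):
    """Solve K(x) u = LOAD and return g(u)."""
    u = solve2(stiffness(x), LOAD)
    return SELECT[0] * u[0] + SELECT[1] * u[1]


def adjoint_gradient(x):
    """dg/dx_i = -lambda^T (dK/dx_i) u, with K^T lambda = dg/du."""
    k = stiffness(x)
    u = solve2(k, LOAD)  # one state solve
    k_t = [[k[0][0], k[1][0]], [k[0][1], k[1][1]]]
    lam = solve2(k_t, SELECT)  # one adjoint solve, shared by all variables
    grad = []
    for dk in stiffness_derivatives():
        dk_u = [dk[0][0] * u[0] + dk[0][1] * u[1],
                dk[1][0] * u[0] + dk[1][1] * u[1]]
        grad.append(-(lam[0] * dk_u[0] + lam[1] * dk_u[1]))
    return grad


def central_difference_gradient(x, rel_step=1e-6):
    """Central difference with a relative step; two solves per variable."""
    grad = []
    for i in range(len(x)):
        h = rel_step * max(abs(x[i]), 1.0)
        xp, xm = list(x), list(x)
        xp[i] += h
        xm[i] -= h
        grad.append((response(xp) - response(xm)) / (2.0 * h))
    return grad


def complex_step_gradient(x, step=1e-30):
    """Complex step with an imaginary perturbation; one solve per variable."""
    grad = []
    for i in range(len(x)):
        xc = [complex(v) for v in x]
        xc[i] += 1j * step
        grad.append(response(xc).imag / step)
    return grad
```

For this model the exact gradient is `(-1/k0**2, -1/k1**2)`, so the three routines can be checked against each other. The finite-difference and complex-step loops re-solve the system for every design variable, whereas the adjoint routine reuses one extra solve for all of them.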

- Constraint Aggregation

- Researched Methods

- Maximum Constraint (MAX)

- Kreisselmeier-Steinhauser (KS) Function

- Adaptive Kreisselmeier-Steinhauser (AKS) Function

- Compared performance of different constraint handling schemes

- Studied the effect of using constraint aggregation in optimization process

- Derived an adaptive approach to ensure the optimizer can access complete feasible region
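
As a point of reference for the aggregation methods above, here is a minimal sketch of the standard KS function for constraints written as `g_i <= 0`. It shows the common shifted form only, not the adaptive (AKS) variant developed in this work; the function name `ks_aggregate` and the default `rho=50.0` are assumptions chosen for illustration.

```python
import math


def ks_aggregate(constraints, rho=50.0):
    """Kreisselmeier-Steinhauser aggregate of constraint values g_i.

    Uses the shifted form g_max + ln(sum(exp(rho * (g_i - g_max)))) / rho,
    which avoids overflow in exp() for large rho or large g_i.
    """
    g_max = max(constraints)
    total = sum(math.exp(rho * (g - g_max)) for g in constraints)
    return g_max + math.log(total) / rho
```

The KS value is a smooth, conservative envelope of the maximum constraint: it lies between `max(g)` and `max(g) + ln(m) / rho` for `m` constraints. A larger `rho` tightens that gap, which is the trade-off an adaptive scheme would typically tune.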
